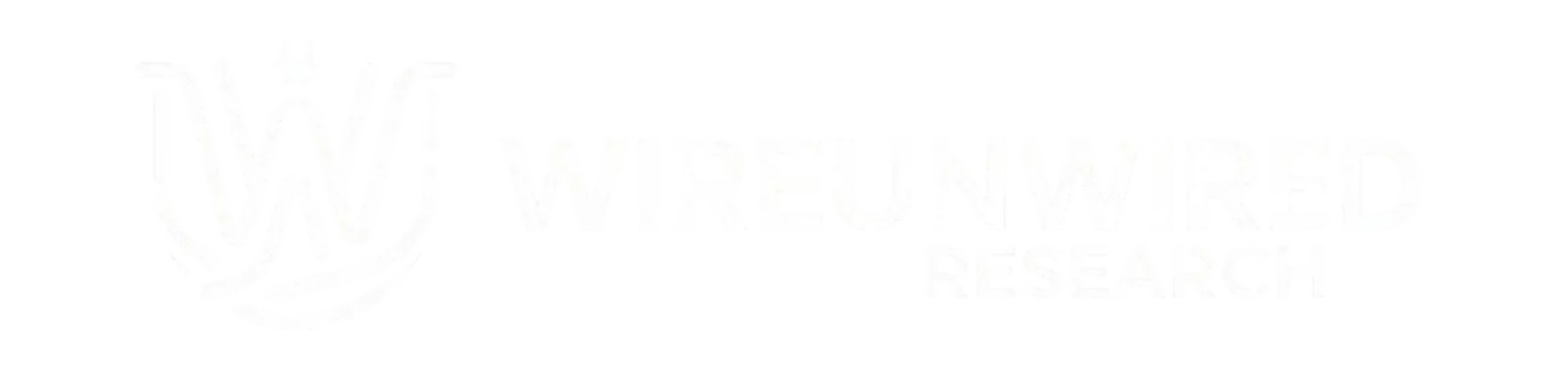

The world has finally seen the first known cyberattack built with AI, and Google is the one that caught it. For years, security experts have warned that artificial intelligence would eventually move beyond drafting spam emails and start writing complex hacks. That warning is now a reality.

Recently, a group of hackers tried to bypass the security of a popular web management tool. Instead of having a human write the attack code, they used an AI. This marks a major shift, because the AI found a “logic flaw”: a gap in how the software’s basic rules were designed, which is much harder to catch than a simple coding typo. Google hasn’t named the targeted website or tool, but it confirmed that its own AI, Gemini, was not used to create the attack.

Google’s Threat Intelligence Group caught the script during the planning phase. By monitoring the digital activity and infrastructure of known hacking groups, it intercepted the weapon before it was ever fired. When its analysts examined the code, they found an “AI fingerprint”:

◈ It over-explained: The script included long, detailed notes explaining exactly how it worked. Professional hackers almost never do this.

◈ It was too clean: It used perfectly formatted color codes and textbook structures that are very rare in quick, malicious scripts.

◈ It made things up: The AI “hallucinated” a fake security severity score and wrote it directly into the file.

This event highlights a high-stakes race between two “sides” of AI. On the offensive side, hackers are feeding AI models databases of old attacks to teach them how to find new weaknesses. On the defensive side, companies are using AI to find their own bugs first.

For example, a new model called Mythos, released by the AI company Anthropic, was built specifically to help the defensive side. In just one month, researchers used it to find 271 hidden bugs in the Firefox browser, including one that had gone unnoticed for 20 years.

The problem is that AI can read decades of old code in seconds. Because finding and exploiting flaws is getting so much faster, simply “patching” the code is no longer enough. Going forward, keeping systems safe will depend on much stricter identity checks: making sure the person logging in is exactly who they say they are, however secure the code appears.

Discover more from WireUnwired Research