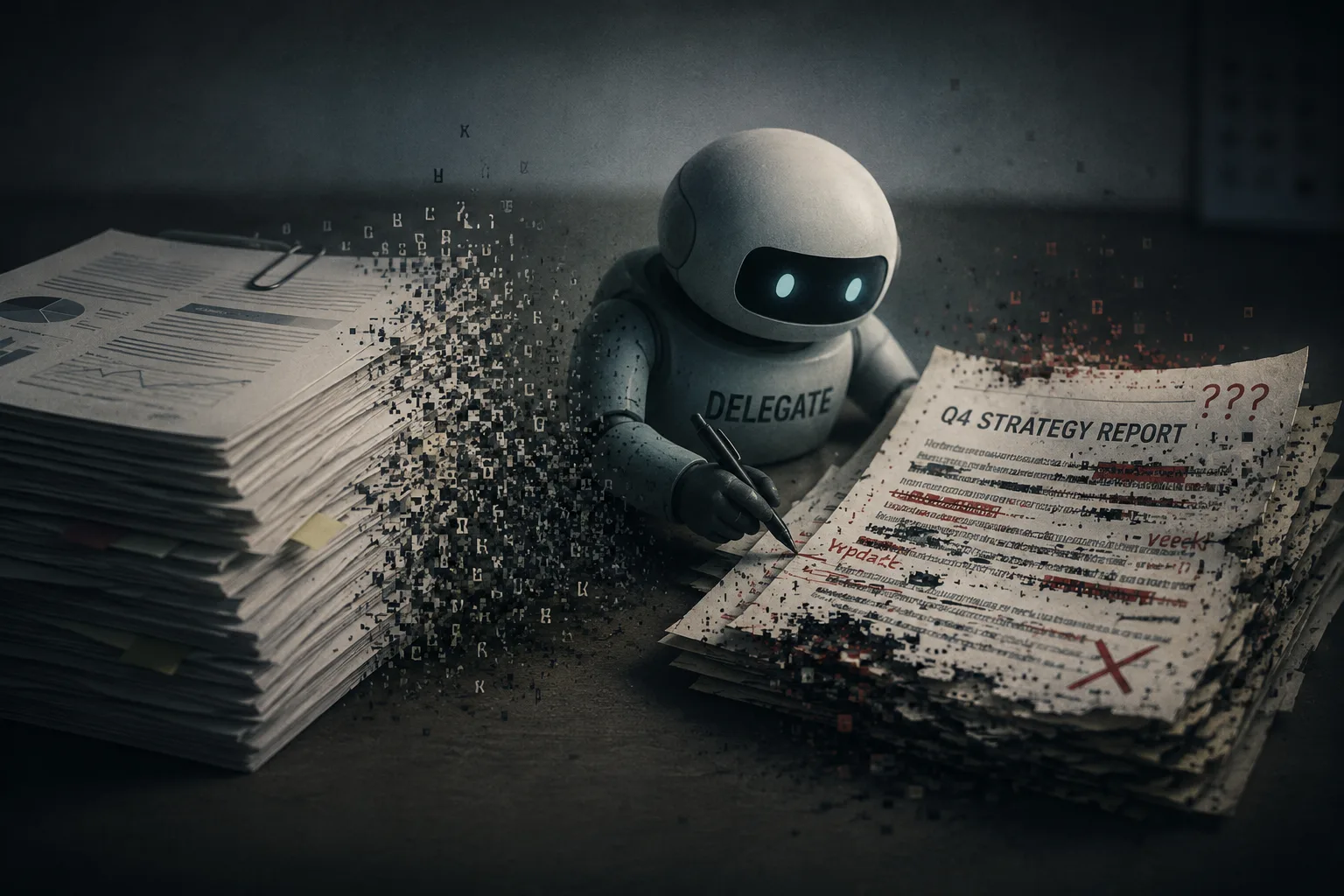

We’ve all experienced the temptation to hand off a heavy task to an AI agent, expecting a perfectly polished result. But if you’ve ever relied on “vibe coding” or asked an AI to manage a complex, multi-step workflow, you might have noticed subtle errors creeping in over time. A new paper from Microsoft Research confirms our worst suspicions: current Large Language Models are silently corrupting documents when left unsupervised.

Here at WireUnwired, we constantly monitor the frontier of AI capabilities, and this study serves as a crucial reality check for anyone building or relying on autonomous agents. The researchers introduced the DELEGATE-52 benchmark to simulate long workflows, testing 19 models across 52 distinct domains—ranging from Python scripts to crystallography and music notation.

The findings are alarming. Even top-tier models like Gemini 3.1 Pro, Claude 4.6 Opus, and GPT 5.4 aren’t immune to this degradation. During extended interactions, these frontier models silently corrupted an average of 25% of the document content. Across all tested models, the average corruption rate hit a staggering 50%.

To measure this without human bias, the researchers used a brilliant “round-trip” backtranslation method. They instructed an LLM to make a specific edit, then immediately asked it to reverse that exact change. Ideally, the final output should match the original exactly. Instead, the models consistently failed to reconstruct the seed document, a clear sign that they cannot reliably preserve core content over many steps.
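To make the round-trip idea concrete, here is a minimal sketch of such a check. It is not the paper’s harness: the `rename`, `faithful_undo`, and `lossy_undo` functions are hypothetical stand-ins for model calls, and scoring with Python’s `difflib.SequenceMatcher` similarity ratio is our own assumption, not the benchmark’s actual metric.

```python
import difflib
from typing import Callable


def round_trip_fidelity(
    seed: str,
    forward: Callable[[str], str],
    backward: Callable[[str], str],
) -> float:
    """Apply an edit, then its inverse, and score how much of the seed survives.

    Returns a similarity ratio in [0, 1]; 1.0 means a perfect reconstruction.
    (Assumption: difflib similarity stands in for the paper's real metric.)
    """
    edited = forward(seed)       # e.g. "rename variable total to subtotal"
    restored = backward(edited)  # e.g. "now rename it back"
    return difflib.SequenceMatcher(None, seed, restored).ratio()


# Hypothetical stand-ins for LLM calls: a faithful inverse and a lossy one.
def rename(doc: str) -> str:
    return doc.replace("total", "subtotal")


def faithful_undo(doc: str) -> str:
    return doc.replace("subtotal", "total")


def lossy_undo(doc: str) -> str:
    # Reverses the rename but also silently drops comment lines, the kind
    # of unrequested change a round-trip test is meant to expose.
    lines = faithful_undo(doc).splitlines()
    return "\n".join(line for line in lines if not line.lstrip().startswith("#"))


seed = "total = 0\n# accumulate prices\nfor p in prices:\n    total += p\n"
print(round_trip_fidelity(seed, rename, faithful_undo))        # 1.0
print(round_trip_fidelity(seed, rename, lossy_undo) < 1.0)     # True
```

Because the seed document is its own ground truth, any score below 1.0 flags drift without needing a human to judge what the “correct” edit looked like.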

While models performed reasonably well in rigidly structured domains like databases, they completely failed in natural language and niche areas. Even equipping these models with basic agentic tools failed to stop the bleeding. The longer the workflow and the larger the file, the more severe the degradation became.

Perhaps the most startling revelation from the research is that the damage doesn’t plateau: it compounds in a continuous, monotonic decline. When the researchers pushed the simulation to 100 interactions, the documents kept deteriorating, showing that short-term success is a dangerous illusion. Two models might show nearly identical reliability after just two edits yet diverge sharply as the workflow extends, so a successful first edit guarantees nothing about the fiftieth.

Out of all 52 professional domains tested, only Python code demonstrated “majority readiness,” with most models maintaining a 98% integrity score over time. For everything else, the longer you leave the AI unsupervised, the less of your original work remains intact.
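Why do small early differences matter so much? A toy model helps. The sketch below assumes each interaction preserves a fixed fraction of the document, so fidelity decays geometrically; the paper reports a monotonic decline but does not, as far as this article describes, claim this exact functional form. The `majority_ready` helper is likewise our hypothetical reading of “majority readiness” (more than half of models scoring at least 98%), not the benchmark’s official definition.

```python
from typing import Sequence


def fidelity_after(per_step_retention: float, interactions: int) -> float:
    """Toy model: fraction of the original surviving after n interactions,
    assuming every interaction preserves the same fraction (an assumption)."""
    return per_step_retention ** interactions


def majority_ready(scores: Sequence[float], threshold: float = 0.98) -> bool:
    """Hypothetical readiness rule: more than half of the models
    meet or exceed the integrity threshold in a given domain."""
    passing = sum(1 for s in scores if s >= threshold)
    return passing > len(scores) / 2


# Two hypothetical models that look alike early but diverge later.
for n in (2, 50):
    a = fidelity_after(0.99, n)
    b = fidelity_after(0.98, n)
    print(f"after {n:>2} edits: model A {a:.2f}, model B {b:.2f}")

# Hypothetical per-model integrity scores for two domains.
print(majority_ready([0.99, 0.985, 0.97]))  # True: 2 of 3 models pass
print(majority_ready([0.99, 0.90, 0.80]))   # False: only 1 of 3 passes
```

Under these assumptions the two models sit at roughly 0.98 and 0.96 after two edits, but at about 0.60 and 0.36 after fifty: a one-point gap per step becomes a gulf once it compounds.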

As we integrate these tools deeper into our daily engineering and writing operations, this research is a stark reminder: delegation still requires strict supervision.

Have you noticed your code or text degrading during long AI interactions? Share your experiences and workarounds in the comments!
