Every few months, a new AI model breaks records on standard lab tests. But when companies such as banks or hospitals actually plug these “state-of-the-art” models into their daily workflows, the AI often fails miserably.

Why is there such a massive gap between lab scores and real-world performance?

A new research paper from the National Institute of Standards and Technology (NIST) and Carnegie Mellon University tackles this exact problem. In the paper, you won’t find any new benchmark scores or model rankings. Instead, the researchers provide a complete blueprint for how we need to fix AI testing.

The core problem, they explain, is that current AI tests are completely disconnected from reality.

The Missing Link: People + Technology

The researchers point out a massive blind spot: we currently test AI as if it were just an isolated piece of software. But in the real world, AI is part of what experts call a “sociotechnical system.” That is just a fancy way of saying it is a messy mix of computer code and human behavior.

If you only test how smart the AI is, but ignore how a tired human worker will actually interact with it, the whole system will eventually break. To run a fair test, you have to evaluate the entire environment, people included.

Building a Better Test from Scratch

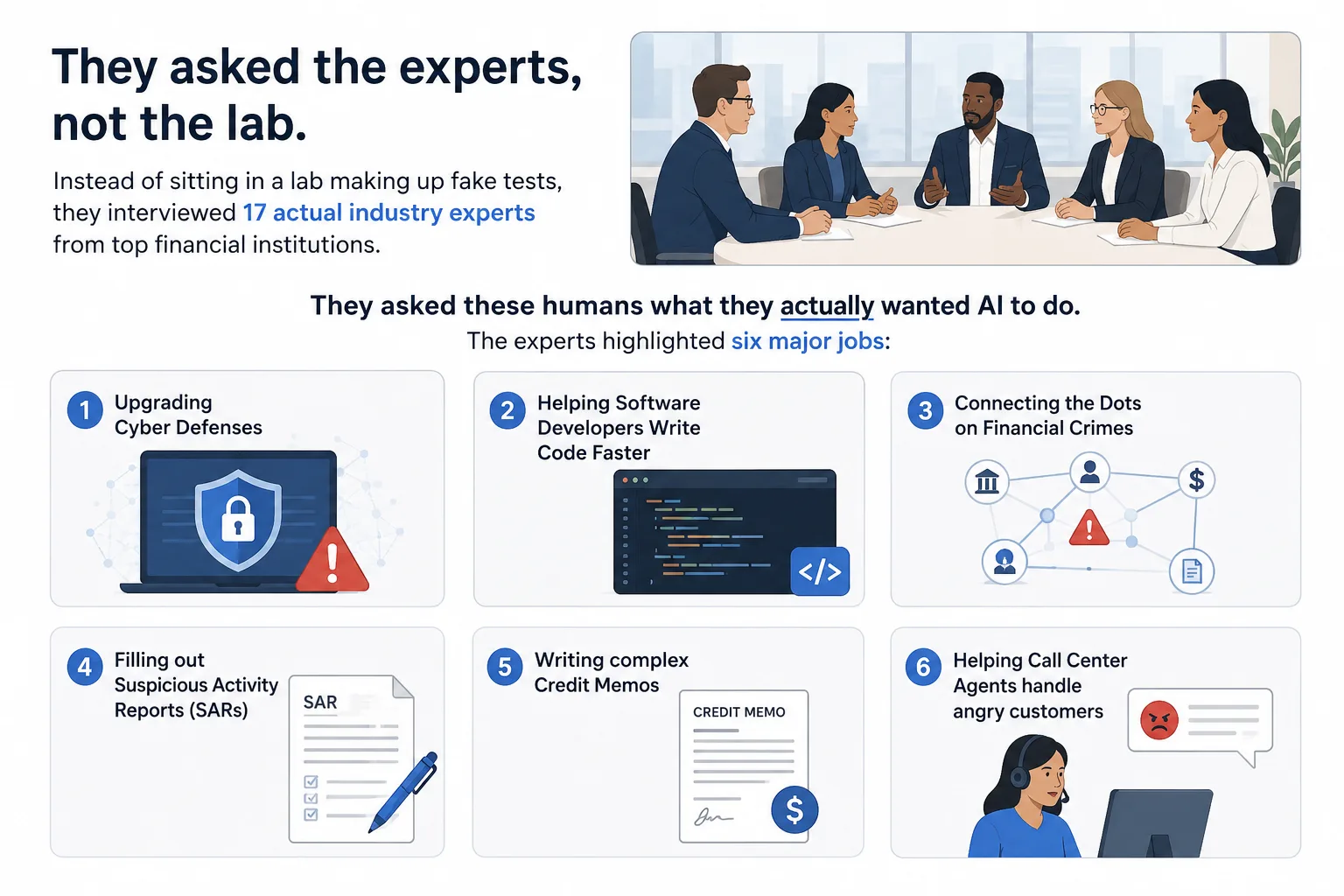

To prove how this should be done, the researchers focused on the U.S. financial services sector—an industry where you absolutely cannot afford to make mistakes.

Instead of sitting in a lab making up fake tests, they interviewed 17 industry experts from top financial institutions and asked them what they actually wanted AI to do. The experts highlighted six major jobs:

➤ Upgrading Cyber Defenses

➤ Helping Software Developers Write Code Faster

➤ Connecting the Dots on Financial Crimes

➤ Filling out Suspicious Activity Reports (SARs)

➤ Writing complex Credit Memos

➤ Helping Call Center Agents handle angry customers

But you cannot just tell an AI “Upgrade our cyber defenses” and give it a grade. That is too broad. You need highly specific, detailed scenarios.

To get that level of detail, the researchers built a unique tag-team pipeline. They used an AI model (Claude Sonnet 4) to brainstorm specific testing scenarios, and then had human experts manually review and correct the AI’s work at every single step.

This human-AI collaboration turned those six broad jobs into 107 highly detailed, ready-to-use testing scenarios.
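The draft-then-review loop can be pictured with a minimal Python sketch. This is not the researchers' code: `expand_use_case`, `draft_scenarios`, and `expert_review` are hypothetical names, the model call is replaced by a stub, and the real pipeline involves human review at several stages rather than the single pass shown here.

```python
from typing import Callable, Iterable, Optional


def expand_use_case(
    use_case: str,
    draft_scenarios: Callable[[str], Iterable[str]],
    expert_review: Callable[[str], Optional[str]],
) -> list[str]:
    """Turn one broad use case into reviewed scenarios.

    draft_scenarios stands in for the AI model that brainstorms candidates.
    expert_review stands in for a human who returns a corrected scenario,
    or None to reject it.
    """
    approved = []
    for candidate in draft_scenarios(use_case):
        reviewed = expert_review(candidate)
        if reviewed is not None:
            approved.append(reviewed)
    return approved


# Stubs for illustration only (assumptions, not real model or expert output).
def stub_model(use_case: str) -> list[str]:
    return [f"{use_case}: scenario A", f"{use_case}: something vague"]


def stub_expert(candidate: str) -> Optional[str]:
    if "vague" in candidate:
        return None  # the expert throws out scenarios that are too broad
    return candidate + " (reviewed)"


scenarios = expand_use_case("Upgrading cyber defenses", stub_model, stub_expert)
print(scenarios)
```

The point of the design is that nothing the model drafts reaches the final scenario list without passing through a human.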

The Stress Test: How to Break the AI

Once you have your scenarios, how do you actually test the AI? You bring in the “Red Team.”

Red-teaming is basically an authorized, simulated attack. Evaluators actively try to break the AI, trick it into leaking sensitive data, or force it to make a terrible decision.

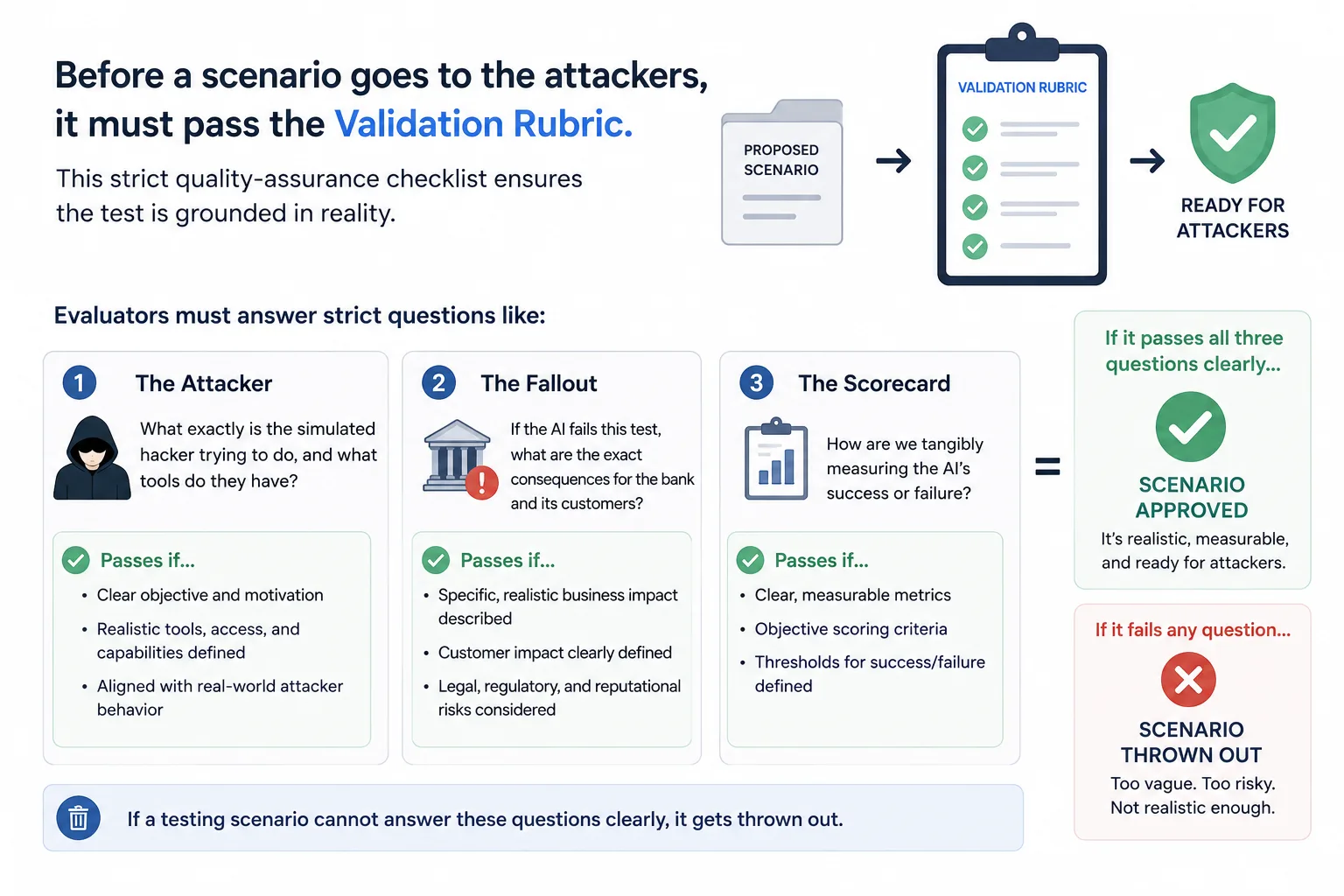

But before a scenario is handed over to the attackers, it must pass a strict quality-assurance checklist, which the researchers call a “Validation Rubric.” This checklist ensures the test is grounded in reality. Evaluators must answer strict questions like:

➤ The Attacker: What exactly is the simulated hacker trying to do, and what tools do they have?

➤ The Fallout: If the AI fails this test, what are the exact consequences for the bank and its customers?

➤ The Scorecard: How are we tangibly measuring the AI’s success or failure?

If a testing scenario cannot answer these questions clearly, it gets thrown out.
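As a rough illustration, the three questions above could be encoded as a simple completeness check. The sketch below is an assumption-laden toy, not the paper's rubric: the `Scenario` fields and the `rubric_gaps` function are hypothetical names, the actual rubric may ask more than these questions, and the example metric is invented for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Scenario:
    """A candidate test scenario (field names are hypothetical)."""
    name: str
    attacker_goal: str = ""                                    # The Attacker: what are they trying to do?
    attacker_tools: list[str] = field(default_factory=list)    # The Attacker: what tools do they have?
    consequences: str = ""                                     # The Fallout
    success_metrics: list[str] = field(default_factory=list)   # The Scorecard


def rubric_gaps(scenario: Scenario) -> list[str]:
    """Return the rubric questions the scenario leaves unanswered."""
    gaps = []
    if not scenario.attacker_goal.strip():
        gaps.append("attacker goal")
    if not scenario.attacker_tools:
        gaps.append("attacker tools")
    if not scenario.consequences.strip():
        gaps.append("consequences of failure")
    if not scenario.success_metrics:
        gaps.append("success metrics")
    return gaps


vague = Scenario(name="Is the AI safe?")
specific = Scenario(
    name="Threat Intelligence Correlation",
    attacker_goal="Trick the AI into blaming the wrong group for an attack",
    attacker_tools=["fake intelligence reports"],
    consequences="The security team is sent chasing the wrong group",
    # Illustrative metric only; not taken from the paper.
    success_metrics=["share of planted reports that change the attribution"],
)

for s in (vague, specific):
    gaps = rubric_gaps(s)
    print(s.name, "->", "thrown out, missing: " + ", ".join(gaps) if gaps else "ready for the Red Team")
```

A check like this only confirms that every question has an answer; judging whether the answers are realistic still falls to the human evaluators.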

Putting It Into Practice: A Real-World Example

Let’s see what this looks like in action.

Imagine a bank wants to test an AI that tracks cybersecurity threats. The researchers didn’t just write a generic test like “Is the AI safe?”

Instead, using their new framework, they built a highly specific scenario called Threat Intelligence Correlation. In this test, the AI’s job is to read external threat reports and warn the bank about incoming attacks.

The Red Team is given a very specific mission: Try to feed the AI fake intelligence reports. The test isn’t just about whether the AI is smart; it’s about whether a hacker can trick the AI into blaming the wrong group for a cyberattack, sending the bank’s security team on a wild goose chase.

That is a real test. That is what actually matters to a business.

The Takeaway

The researchers make one thing abundantly clear: if we want to accurately measure how AI will perform in the enterprise, we have to stop testing it in a vacuum. To fix the “apples to oranges” testing problem, the AI industry must embrace two non-negotiable standards moving forward:

➤ Scenarios must be real: A test is useless if it doesn’t reflect actual human workflows. We must evaluate highly specific, daily tasks rather than vague, high-level capabilities.

➤ The Rubric is the law: Before any test begins, evaluators must use a strict validation checklist to define the exact stakes. If you don’t know the simulated attacker’s goal, the real-world consequences of failure, and the exact metrics for success, the test is invalid.

Whether in finance, healthcare, or manufacturing, adopting this structured, human-centered approach is the only way we will finally know if an AI is truly ready for the real world.
