For years, the AI hardware race revolved around a simple goal: build the fastest accelerator.

More TOPS. Higher efficiency. Better utilization. Lower power consumption.

The assumption was straightforward: if one processor could execute AI workloads faster and more efficiently than everyone else, it would dominate the future of AI computing.

That assumption is beginning to break down.

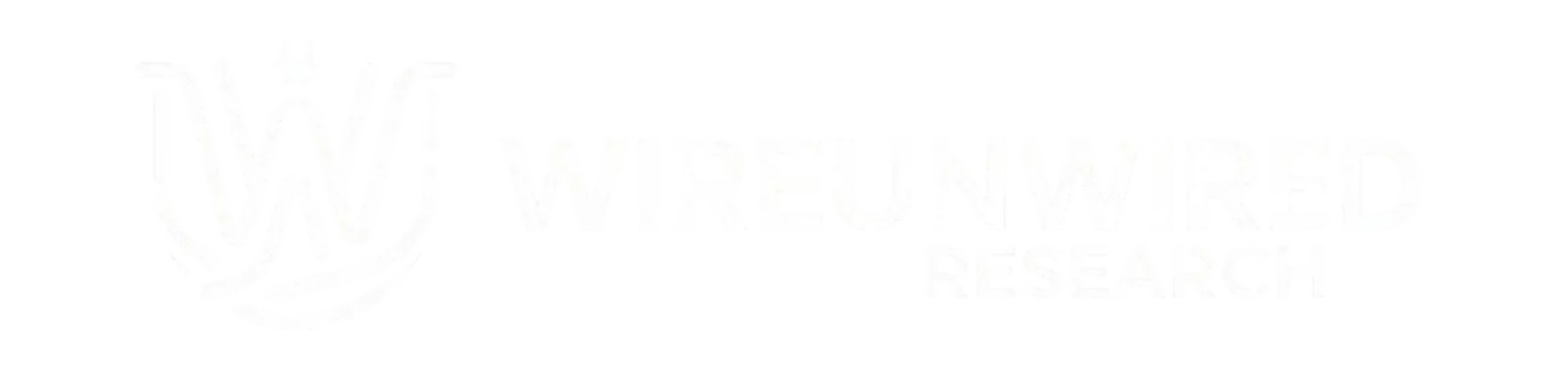

The AI industry spent years searching for the best accelerator. But modern AI workloads are becoming so diverse that the idea of a universally “best” AI chip is starting to lose meaning.

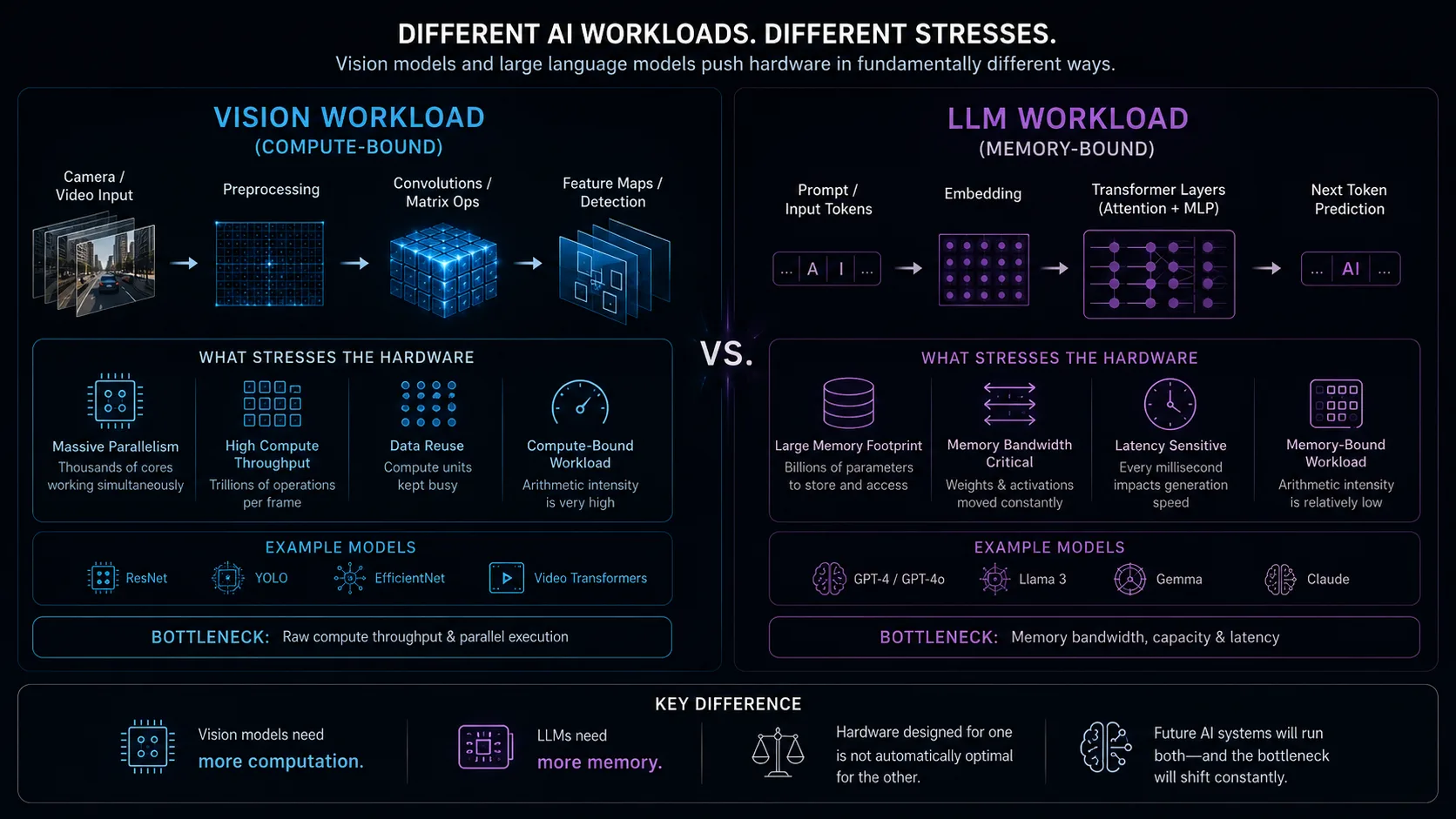

A computer vision model processing 4K video stresses hardware very differently from a large language model generating tokens in real time. Vision workloads are often heavily compute-bound, relying on massively parallel matrix operations across millions of pixels per frame. Large language models, by contrast, are frequently memory-bound: the challenge is less about raw computation and more about streaming enormous amounts of model weights and contextual data through memory fast enough to sustain token generation.
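The compute-bound versus memory-bound distinction can be made concrete with a roofline-style estimate. The sketch below is illustrative only: the `Device` numbers (100 TFLOP/s, 1 TB/s) are hypothetical rather than taken from any real chip, and the byte counts assume 16-bit weights with no caching or operator fusion. It compares a workload's arithmetic intensity (FLOPs per byte moved) against the device's ridge point.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Device:
    """Hypothetical accelerator; the numbers used below are illustrative."""
    peak_flops: float      # peak compute in FLOP/s
    mem_bandwidth: float   # peak memory bandwidth in bytes/s

    @property
    def ridge_point(self) -> float:
        # Arithmetic intensity (FLOP/byte) above which compute is the limit.
        return self.peak_flops / self.mem_bandwidth


def arithmetic_intensity(flops: float, bytes_moved: float) -> float:
    return flops / bytes_moved


def classify(flops: float, bytes_moved: float, device: Device) -> str:
    intensity = arithmetic_intensity(flops, bytes_moved)
    return "compute-bound" if intensity >= device.ridge_point else "memory-bound"


def attainable_flops(flops: float, bytes_moved: float, device: Device) -> float:
    # Roofline: performance is capped by compute or by bandwidth * intensity.
    intensity = arithmetic_intensity(flops, bytes_moved)
    return min(device.peak_flops, device.mem_bandwidth * intensity)


device = Device(peak_flops=100e12, mem_bandwidth=1e12)  # assumed values

# Single-token decode step: one d x d weight matrix times one vector (fp16).
d = 4096
decode_flops = 2 * d * d                 # one multiply and one add per weight
decode_bytes = 2 * d * d + 2 * (2 * d)   # weights plus input and output vectors

# Dense n x n matrix multiply, a stand-in for batched vision-style compute.
n = 4096
matmul_flops = 2 * n ** 3
matmul_bytes = 3 * n * n * 2             # read A and B, write C, all fp16

for name, f, b in [("decode", decode_flops, decode_bytes),
                   ("matmul", matmul_flops, matmul_bytes)]:
    print(name, round(arithmetic_intensity(f, b), 2), classify(f, b, device))
```

Under these assumptions the decode step performs roughly one FLOP per byte moved and sits far below the ridge point, while the large matrix multiply sits far above it. The same silicon is limited by different resources depending on the workload.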

As AI systems become multimodal and increasingly agentic, those computational behaviors begin to overlap inside the same application. A vision-language-action model may process images, interpret text, retain context, coordinate smaller models, and make decisions simultaneously. The bottleneck can shift dynamically between compute throughput, memory bandwidth, latency, synchronization overhead, orchestration, and data movement depending on which stage of execution dominates at a given moment.

That shift is becoming one of the defining problems of modern AI architecture.

Historically, accelerators achieved efficiency by optimizing around relatively stable assumptions about workloads. But AI workloads are now evolving faster than silicon design cycles themselves. New operators, model structures, memory patterns, and inference behaviors appear continuously, making it increasingly difficult for any single architecture to remain universally optimal.

As Steve Roddy, Corporate Vice President of Qualcomm’s Edge AI and Computing business, explains, earlier edge AI systems were dominated by relatively fixed vision tasks. Modern AI applications now combine multiple forms of computation inside the same system, forcing hardware to support a far broader range of behaviors than previous generations ever required.

The consequence is a growing move toward heterogeneous architectures.

Instead of relying on a single processor type, modern AI systems increasingly distribute workloads across multiple compute engines, each optimized for different computational patterns. CPUs handle orchestration, scheduling, and flexible control flow. GPUs provide massively parallel compute for scalable workloads. NPUs deliver efficient neural inference under constrained power budgets. DSPs often process low-power sensor, audio, and signal-processing pipelines close to the edge.

The goal is no longer to force every workload onto one architecture. It is to move each stage of computation to whichever architecture runs it most efficiently.

That shift becomes especially visible in real-world AI systems. An autonomous vehicle, for example, may simultaneously run vision models on GPUs, low-latency sensor fusion on DSPs, planning and coordination tasks on CPUs, and transformer inference on dedicated AI accelerators, all while operating in real time under strict power, latency, and thermal constraints.

Increasingly, AI hardware behaves less like a standalone processor and more like a coordinated computing ecosystem.

This transition is already visible across the industry.

NVIDIA’s dominance, for example, is no longer explained purely by raw GPU performance. Its larger advantage comes from CUDA and the surrounding software ecosystem, which allowed developers to treat increasingly complex hardware as a relatively unified computing platform. That flexibility helped NVIDIA adapt as AI workloads evolved from computer vision models to transformers and multimodal systems.

But the same industry shift is also pushing computing beyond purely GPU-centric architectures. Apple’s AI silicon combines CPUs, GPUs, NPUs, and memory into tightly integrated edge systems optimized for power efficiency. Qualcomm increasingly distributes AI workloads across NPUs, DSPs, CPUs, and cloud-connected systems for mobile and wearable devices. Google continues building TPUs optimized around hyperscale AI infrastructure, while AMD and Intel are both expanding heterogeneous compute strategies that combine multiple forms of acceleration inside broader system-level platforms.

The industry is no longer converging toward one ideal processor architecture.

It is fragmenting into increasingly specialized compute ecosystems coordinated through software.

That fragmentation, however, introduces a new problem: orchestration complexity.

Once workloads begin moving dynamically between CPUs, GPUs, NPUs, DSPs, edge devices, and cloud systems, the challenge is no longer simply accelerating AI computation. The real problem becomes coordinating computation, memory movement, synchronization, and scheduling efficiently across the entire system.

This is where software becomes central.

A model may begin execution on an NPU for dense neural inference, shift unsupported operations to a CPU, offload highly parallel stages to a GPU, and rely on DSPs for low-power sensor processing. Coordinating those transitions manually would be nearly impossible at scale.

Compilers, runtimes, and toolchains therefore become critical layers in modern AI architecture. They determine how models are partitioned across processors, how memory is managed between compute blocks, and how fallback paths behave when workloads exceed the capabilities of a particular accelerator.
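As a rough illustration of what such a partitioning step does, the sketch below assigns each operator in a linear model to the first device in a preference order that supports it, falls back to the CPU otherwise, and groups consecutive operators into per-device segments. The operator names, the `SUPPORTED` table, and the preference order are all hypothetical. Real compilers and runtimes also weigh transfer costs, memory limits, and scheduling, which this sketch ignores.

```python
from itertools import groupby

# Hypothetical operator support table. None marks the CPU as the
# catch-all fallback that can execute any operator.
SUPPORTED = {
    "npu": {"conv2d", "matmul", "relu", "softmax"},
    "gpu": {"conv2d", "matmul", "relu", "softmax", "layernorm", "topk"},
    "cpu": None,
}


def place(op, preference=("npu", "gpu", "cpu")):
    """Return the first device in the preference order that supports op."""
    for dev in preference:
        ops = SUPPORTED[dev]
        if ops is None or op in ops:
            return dev
    raise ValueError(f"no device supports operator {op!r}")


def partition(ops, preference=("npu", "gpu", "cpu")):
    """Group consecutive operators placed on the same device into segments."""
    placed = [(place(op, preference), op) for op in ops]
    return [(dev, [op for _, op in group])
            for dev, group in groupby(placed, key=lambda pair: pair[0])]


# Illustrative model: a linear sequence of operator names.
model = ["conv2d", "relu", "layernorm", "matmul", "custom_nms", "softmax"]
segments = partition(model)
transitions = len(segments) - 1  # each boundary implies a device hand-off

for dev, ops in segments:
    print(dev, ops)
print("device transitions:", transitions)
```

Each segment boundary stands for a hand-off between processors, so a single unsupported operator in the middle of a model (here the made-up `custom_nms`) adds two transitions. That is one reason fallback behavior matters as much as peak accelerator speed.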

Without that software layer, heterogeneous systems become fragmented collections of hardware rather than unified AI platforms.

But software orchestration does not eliminate complexity. In many ways, it is a response to growing hardware fragmentation. As architectures diversify, software increasingly becomes the layer responsible for hiding synchronization costs, portability problems, unsupported operators, memory inefficiencies, and scheduling overhead from developers.

In other words, software is not replacing hardware constraints. It is becoming the mechanism that makes increasingly fragmented hardware usable.

As Jason Lawley, Senior Director of Product Management at Qualcomm Technologies, points out, many customers care most about proprietary AI models that hardware vendors never encounter beforehand. That means AI systems must increasingly support unfamiliar workloads dynamically rather than relying entirely on pre-optimized benchmark models.

Hardware alone can no longer guarantee adaptability.

Specialized accelerators can achieve extraordinary efficiency because they optimize aggressively around known computational patterns. But the tighter those optimizations become, the harder architectures become to adapt as models evolve.

Flexible systems, on the other hand, can support broader classes of workloads and future models, though often at the cost of higher power consumption, larger silicon area, or lower peak efficiency.

Modern AI architecture is therefore becoming a balance between specialization and resilience. Systems must optimize aggressively enough to remain efficient, while remaining flexible enough to survive changing workloads and uncertain future models.

As Ronan Naughton, Vice President of Corporate Marketing at Edge Impulse, describes, AI workloads are increasingly being distributed across entire ecosystems rather than isolated accelerators. Lightweight inference may run locally on wearables or edge devices, while larger reasoning workloads shift dynamically to smartphones or cloud infrastructure depending on latency, power, and compute constraints.
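A placement decision of this kind can be sketched as a simple policy: try the most local tier first and move outward only when the model does not fit or the latency budget cannot be met. Everything in the sketch below is an assumption made for illustration, including the tier names, their memory and throughput figures, the network round-trip times, and the workload sizes. Power is represented only indirectly, through the preference for local execution.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Tier:
    """Hypothetical execution tier; all figures are illustrative."""
    name: str
    max_model_mb: float   # largest model that fits in memory
    gops: float           # sustained throughput, giga-operations per second
    rtt_ms: float         # network round trip needed to reach the tier


@dataclass(frozen=True)
class Workload:
    name: str
    model_mb: float
    work_gop: float           # giga-operations per inference
    latency_budget_ms: float


def estimated_latency_ms(w: Workload, t: Tier) -> float:
    return t.rtt_ms + (w.work_gop / t.gops) * 1000.0


def choose_tier(w: Workload, tiers: Sequence[Tier]) -> Optional[Tier]:
    """Pick the most local tier that fits the model and meets the budget."""
    for t in tiers:  # tiers are ordered from most local to most remote
        fits = w.model_mb <= t.max_model_mb
        if fits and estimated_latency_ms(w, t) <= w.latency_budget_ms:
            return t
    return None  # no tier satisfies the constraints


TIERS = [
    Tier("wearable", max_model_mb=8, gops=5, rtt_ms=0),
    Tier("phone", max_model_mb=4_000, gops=2_000, rtt_ms=20),
    Tier("cloud", max_model_mb=200_000, gops=200_000, rtt_ms=80),
]

WORKLOADS = [
    Workload("keyword-spotting", model_mb=1, work_gop=0.01, latency_budget_ms=50),
    Workload("vision-language", model_mb=2_000, work_gop=40, latency_budget_ms=200),
    Workload("long-reasoning", model_mb=60_000, work_gop=4_000, latency_budget_ms=500),
]

for w in WORKLOADS:
    tier = choose_tier(w, TIERS)
    print(w.name, "->", tier.name if tier else "no feasible tier")
```

With these made-up numbers the small model stays on the wearable, the mid-sized one lands on the phone, and the largest goes to the cloud. A production scheduler would also account for battery state, thermal headroom, connectivity, and privacy requirements.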

That broader shift is changing the nature of AI competition itself.

The future of AI computing may not belong to the fastest individual processor, but to the systems that can coordinate the widest diversity of workloads efficiently as AI itself continues to evolve.
