The whole world has gone crazy over ChatGPT’s new image editing capabilities, especially its Studio Ghibli-style images. By now, roughly 100 million people may have made their own Ghibli-style image, and if this article is reaching you, you are probably one of them.

Congratulations🎉.

But have you ever thought about this? Since all this editing is done by GPUs (Graphics Processing Units), it must be consuming some power. But how much?

This WireUnwired article focuses solely on the energy consumed in generating Ghibli-style images. Before we dive into the topic, it helps to be familiar with a few technical terms related to GPUs.

Quick GPU Glossary

| Term | Definition |
|---|---|
| GPU (Graphics Processing Unit) | A specialized processor designed to accelerate image rendering and perform parallel computations, which makes it well suited to AI and deep learning workloads. |
| Tensor Core | A type of processing unit within modern NVIDIA GPUs (such as the A100 or RTX 4090) specifically optimized for the matrix operations used in AI training and inference. |
| CUDA (Compute Unified Device Architecture) | NVIDIA’s parallel computing platform and API that lets developers use GPUs for general-purpose processing. |
| Inference | The process of using a trained AI model to generate an output (like a Ghibli-style image) from new input data. It’s where the “magic” happens after training. |
| Throughput | A measure of how many operations a GPU can perform in a given time. Higher throughput means faster generation and processing. |
| Latency | The time it takes the model to produce a result (like an image). Lower latency means faster responses, which matters for real-time generation. |
| PUE (Power Usage Effectiveness) | A ratio that describes how efficiently a data center uses energy, calculated as total facility energy / energy used by IT equipment. A PUE of 1.3 means 30% overhead beyond the energy consumed by the IT equipment itself. |
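To make the PUE definition concrete, here is a minimal Python sketch. The function names are our own, and the energy figures in the example are hypothetical, chosen only to show how a PUE of 1.3 translates into 30% overhead.

```python
def pue(total_facility_energy_kwh: float, it_equipment_energy_kwh: float) -> float:
    """Power Usage Effectiveness: total facility energy divided by IT equipment energy."""
    if it_equipment_energy_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    return total_facility_energy_kwh / it_equipment_energy_kwh


def overhead_fraction(pue_value: float) -> float:
    """Share of energy spent beyond the IT equipment itself (e.g. 1.3 -> 0.3)."""
    return pue_value - 1.0


# Hypothetical example: a facility uses 130 kWh while its IT equipment uses 100 kWh.
example_pue = pue(130.0, 100.0)
print(f"PUE = {example_pue:.2f}, overhead = {overhead_fraction(example_pue):.0%}")
```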

Energy cost of AI-generated Ghibli-style images

Since OpenAI, the company behind ChatGPT, has thousands of NVIDIA GPUs, we will consider the models most commonly used for AI workloads: probably the NVIDIA A100 or V100.

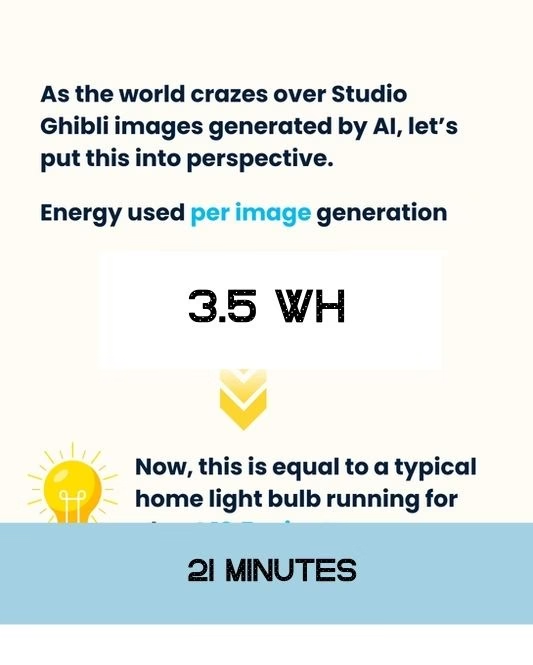

These GPUs draw about 250–400 watts of power during inference. Taking the midpoint, we will assume roughly 325 watts, which means every second of computation uses about 325 joules of energy.

Assuming inference for one image takes around 30 seconds, the energy consumption comes out as:

325 W × (30 / 3,600) h ≈ 2.7 watt-hours (Wh)
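The same arithmetic as a small Python sketch. It assumes, as the text does, a single GPU drawing the midpoint of the 250–400 W range for about 30 seconds per image; `gpu_energy_wh` is an illustrative helper, not part of any library.

```python
SECONDS_PER_HOUR = 3600


def gpu_energy_wh(power_watts: float, duration_seconds: float) -> float:
    """Energy in watt-hours: power (W) multiplied by time converted to hours."""
    return power_watts * duration_seconds / SECONDS_PER_HOUR


# Midpoint of the assumed 250-400 W power range.
midpoint_watts = (250 + 400) / 2  # 325.0

# Assumption from the text: one GPU at ~325 W for ~30 seconds per image.
per_image_wh = gpu_energy_wh(midpoint_watts, 30)
print(f"{per_image_wh:.2f} Wh")  # 2.71 Wh, rounded to 2.7 Wh in the text
```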

What about extra energy consumption?

For example, the energy needed to:

- Cool the GPUs

- Support other infrastructures

- Run auxiliary systems

Generally, this overhead comes to around 30% of the GPUs’ own energy consumption, which corresponds to a PUE of about 1.3.

Taking that into account, the total energy per image becomes:

2.7 Wh × 1.3 ≈ 3.5 Wh
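Applying the overhead is a single multiplication by the PUE factor. In this sketch, the 1.3 default is the article’s rough estimate rather than a measured value for OpenAI’s data centers, and `with_overhead` is a hypothetical helper name.

```python
def with_overhead(it_energy_wh: float, pue: float = 1.3) -> float:
    """Scale GPU (IT) energy by a PUE factor to include cooling and other overhead."""
    return it_energy_wh * pue


gpu_only_wh = 325 * 30 / 3600  # ~2.71 Wh for one image, before overhead
total_wh = with_overhead(gpu_only_wh)
print(f"{total_wh:.2f} Wh")  # 3.52 Wh, rounded to 3.5 Wh in the text
```

Using the unrounded 2.71 Wh gives 3.52 Wh, while the rounded 2.7 Wh gives 3.51 Wh; both round to the 3.5 Wh used from here on.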

Getting a Bigger Picture

So far, we have looked at the energy consumed in generating a single AI Ghibli-style image. To get a sense of how much energy the world may have consumed so far generating these images, we will make a few assumptions:

- Number of people who have made AI-generated Ghibli-style images: 100 million

- Images generated per person: 3

Let’s do the calculations:

3.5 Wh × 3 × 100 million = 1,050,000,000 Wh = 1,050 MWh
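Here is the scale-up as a short Python sketch, using the article’s assumptions of 3.5 Wh per image, 3 images per person, and 100 million people. The function name is illustrative.

```python
WH_PER_MWH = 1_000_000


def total_energy_mwh(wh_per_image: float, images_per_person: float, people: float) -> float:
    """Aggregate energy across all users, converted from Wh to MWh."""
    return wh_per_image * images_per_person * people / WH_PER_MWH


# Assumptions from the text: 3.5 Wh per image, 3 images each, 100 million people.
print(f"{total_energy_mwh(3.5, 3, 100_000_000):,.0f} MWh")  # 1,050 MWh
```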

To grasp the scale of this energy, it is roughly equivalent to:

- The electricity a single 10-watt LED bulb would consume if left on for about 12,000 years

- The energy an electric car would need to drive around the Earth roughly 175 times (assuming about 0.15 kWh per km)

- Enough power to supply a small community of about 70 homes for 1.4 years (assuming roughly 10.5 MWh per home per year, close to the US average)
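The comparisons above can be checked with a quick Python sketch. Every constant below except the 1,050 MWh total is our own illustrative assumption rather than a figure from the estimate itself: a 10 W LED bulb, an electric car using about 0.15 kWh per km, an equatorial circumference of about 40,075 km, and a household using about 10.5 MWh per year. The helper names are hypothetical.

```python
HOURS_PER_YEAR = 24 * 365       # ignores leap days
TOTAL_WH = 1_050 * 1_000_000    # 1,050 MWh from the previous step

# Illustrative assumptions (not part of the original estimate):
BULB_WATTS = 10                 # a typical LED household bulb
EV_WH_PER_KM = 150              # ~0.15 kWh/km for an efficient electric car
EARTH_CIRCUMFERENCE_KM = 40_075 # equatorial circumference
HOME_WH_PER_YEAR = 10_500_000   # ~10.5 MWh/year, roughly a US household


def bulb_years(total_wh: float, bulb_watts: float = BULB_WATTS) -> float:
    """Years a single bulb could stay lit on this much energy."""
    return total_wh / bulb_watts / HOURS_PER_YEAR


def ev_laps(total_wh: float, wh_per_km: float = EV_WH_PER_KM) -> float:
    """Trips around the equator an electric car could drive on this energy."""
    return total_wh / wh_per_km / EARTH_CIRCUMFERENCE_KM


def homes_powered(total_wh: float, years: float,
                  wh_per_home_year: float = HOME_WH_PER_YEAR) -> float:
    """Households that could be supplied for the given number of years."""
    return total_wh / years / wh_per_home_year


print(f"Bulb: {bulb_years(TOTAL_WH):,.0f} years")
print(f"EV: {ev_laps(TOTAL_WH):,.0f} laps of the Earth")
print(f"Homes for 1.4 years: {homes_powered(TOTAL_WH, 1.4):,.0f}")
# One 3.5 Wh image expressed as minutes of bulb light.
print(f"One image: {3.5 / BULB_WATTS * 60:.0f} minutes of light")
```

Under these assumptions the bulb figure lands close to 12,000 years, and a single 3.5 Wh image equals about 21 minutes of a 10 W bulb. The car and household results depend heavily on the efficiency and consumption figures you plug in.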

Conclusion

Sure, AI-generated Ghibli images are magical, fun, and harmless at a glance, but there’s a real energy cost hidden beneath the surface. As AI tools become more popular, it’s worth considering not just how cool they are, but their carbon footprint too 🌱.

Next time you generate an image, just remember: that’s about 21 minutes of light from a 10-watt LED bulb, right there in pixels.

Discover more from WireUnwired Research
