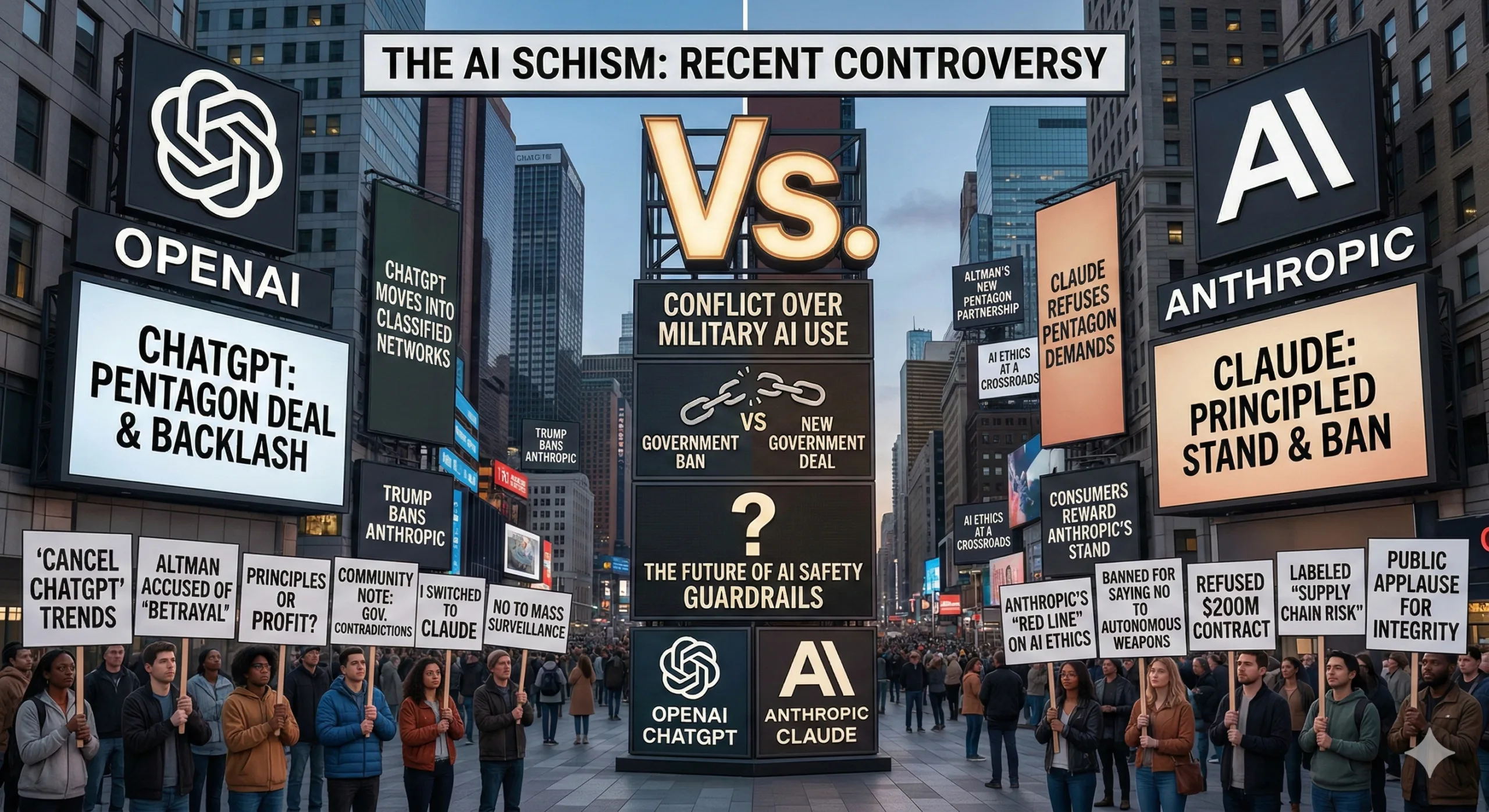

Two weeks ago, OpenAI signed a controversial deal allowing the Pentagon to use its AI in classified environments. Now, as the US escalates strikes against Iran with AI playing a larger role than ever before, the question isn’t whether OpenAI’s technology will be used in combat—it’s what exactly it will do when it gets there.

The technology isn’t deployed yet. Integration requires connecting OpenAI’s models to existing military infrastructure, obtaining security clearances, and running extensive tests, a process that takes months. But pressure is mounting. After Anthropic refused to permit “any lawful use” of its AI, President Trump ordered the military to stop using it and designated Anthropic a supply chain risk. OpenAI is positioned to fill that gap.

If the Iran conflict is still under way when OpenAI’s tech goes live, here’s what deployment could look like, based on conversations with defense officials and announced partnerships.

Target Analysis and Strike Prioritization

A defense official described the scenario: A human analyst inputs potential targets into the AI model. The system analyzes logistics, satellite images, drone video, communications intercepts, and sensor data simultaneously. It identifies patterns humans might miss—maintenance vehicles near airfields at unusual times combined with increased communications traffic might elevate that airfield’s priority because it suggests imminent operations.

This builds on Project Maven, launched in 2017 to automatically analyze drone footage and flag potential targets. OpenAI’s models add a conversational layer on top. Instead of just receiving automated flags, analysts can ask: “Which airfields showed increased activity in the past 48 hours?” or “What logistical patterns suggest this facility is being used for drone assembly?” The model synthesizes Maven’s visual analysis with signals intelligence and historical patterns.

This represents a fundamental shift. AI has long done military analysis, drawing insights from massive datasets. But relying on generative AI’s advice about which actions to take (which targets to prioritize, which assets to deploy where) is being tested in earnest for the first time in the Iran conflict. The model isn’t just identifying what exists; it’s recommending what to do about it.

Counter-Drone Operations with Anduril

In late 2024, OpenAI partnered with Anduril for “time-sensitive analysis of drones attacking US forces.” An OpenAI spokesperson said this didn’t violate policies prohibiting “systems designed to harm others” because the technology targeted drones, not people—a distinction that drew immediate employee criticism.

Anduril’s counter-drone portfolio includes the Anvil interceptor, which physically rams hostile drones, and electronic warfare systems that jam communications or GPS. Anduril has long trained its own AI to analyze camera footage and sensor data for threat identification. OpenAI adds a conversational layer that lets soldiers query these systems in natural language: “Show me drone threats within 10 kilometers prioritized by likelihood of hostile intent.”

The stakes are concrete. Six US service members were killed in Kuwait on March 1 following an Iranian drone attack that US air defenses failed to intercept.

Anduril’s interface, Lattice, controls drone defenses, missiles, and autonomous submarines. The company just won a $20 billion Army contract to connect its systems with legacy military equipment. If OpenAI’s models prove useful, Lattice can incorporate them quickly across this broader warfare stack.

The Accountability Problem

Every AI system eventually fails. Models hallucinate, misinterpret ambiguous data, and reflect biases in their training data. In commercial applications, failures mean bad customer service. In combat, they could mean strikes on the wrong targets or a failure to detect actual threats.

Who bears responsibility if an AI-recommended target turns out to be civilian infrastructure? If counter-drone AI misidentifies a friendly aircraft? Current frameworks assign responsibility to human decision-makers who approve AI recommendations. But as AI integrates into time-sensitive combat operations, pressure to accept recommendations without extensive verification increases. When seconds matter for drone defense, there may not be time for thorough human review.

OpenAI’s internal friction is real. Multiple employees have expressed discomfort with military applications, though most comments remain anonymous. The company’s safeguards—no autonomous weapons, no mass surveillance, no high-stakes automated decisions—are enforced through contracts requiring the military to follow its own guidelines. But those guidelines are quite permissive, and OpenAI has limited enforcement ability once models deploy in classified environments.

The Anthropic situation provides a cautionary precedent. When Anthropic’s leadership decided that some military applications exceeded the company’s risk tolerance, the response was swift: a presidential order to cease use, a supply chain risk designation, and contract termination. Commercial AI providers can be cut out of defense work quickly if they don’t align with administration priorities.

OpenAI’s motivations for military engagement remain debated. Maybe it’s revenue—the company spends heavily on AI training and needs income. Maybe Sam Altman genuinely believes liberal democracies and their militaries must have the most powerful AI to compete with China.

What’s certain: OpenAI has decided it’s comfortable operating in combat. The company’s claims about preventing autonomous weapons use rely on trusting the military to follow its own permissive guidelines. Assertions about preventing domestic surveillance depend on enforcement mechanisms OpenAI has limited visibility into once systems deploy in classified settings.

As the technology moves from announcement to deployment, abstract questions about ethical boundaries become concrete decisions with irreversible outcomes. The Iran conflict may provide the first real-world test of how OpenAI’s military AI performs under operational pressure. What that test reveals about the technology, about OpenAI’s red lines, and about military AI broadly will shape the company’s trajectory and the debate about AI in warfare for years to come.
