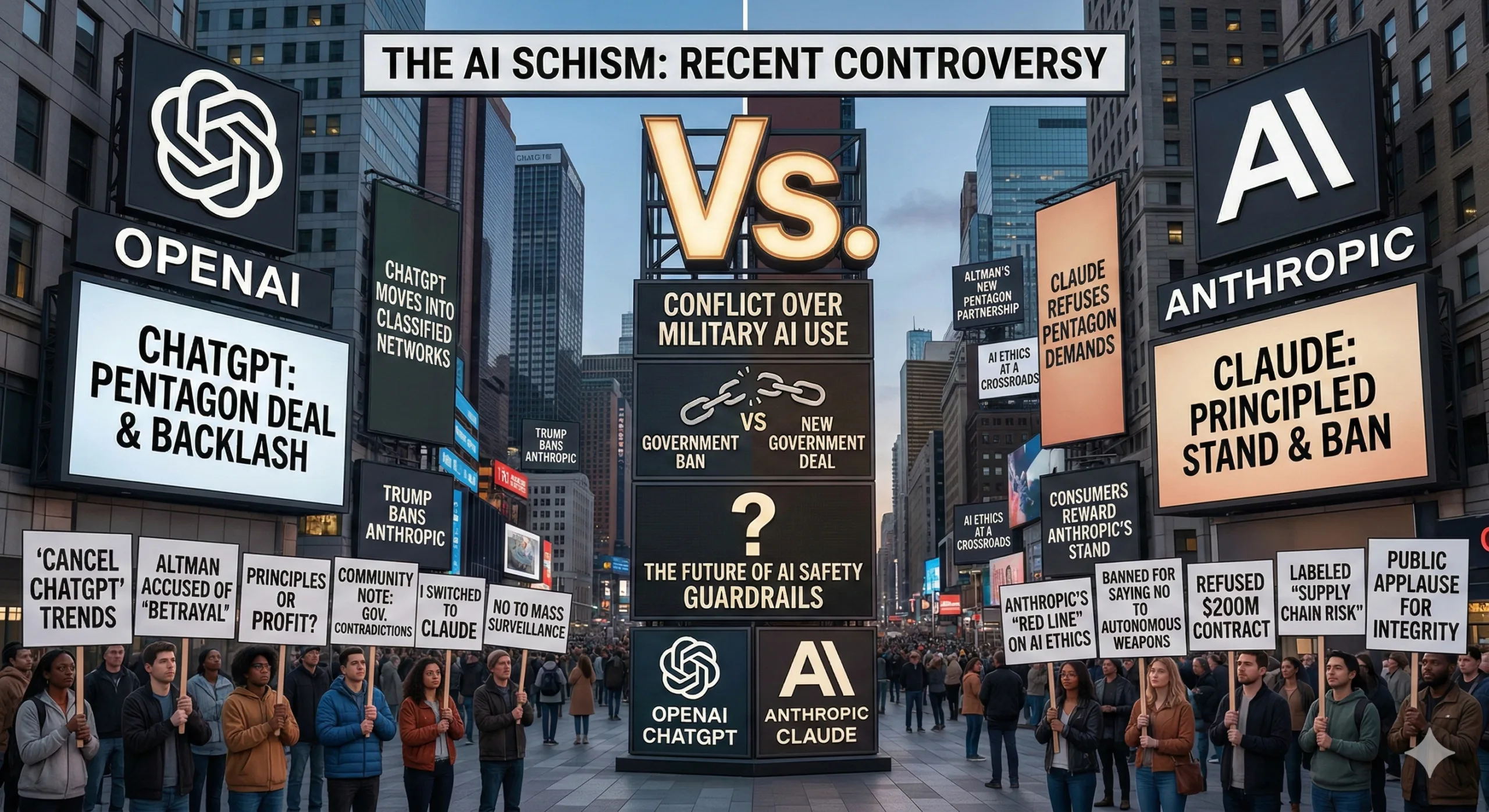

OpenAI just signed a contract to deploy AI systems in classified military environments—and used the announcement to publicly criticize Anthropic’s safety practices. The statement, posted on X (previously Twitter) yesterday, explicitly claims OpenAI’s deployment “has more guardrails than any previous agreement for classified AI deployments, including Anthropic’s.” That’s a direct shot at a competitor while the Department of Justice is already investigating Anthropic over national security concerns.

The timing and phrasing matter. When companies announce major contracts, they typically emphasize their own capabilities without naming competitors. OpenAI didn’t just describe its agreement with the “Department of War” (previously the Department of Defense); it specifically contrasted its approach against Anthropic’s, claiming other labs “have reduced or removed their safety guardrails and relied primarily on usage policies” for national security work.

Then came the real twist:

“We do not think Anthropic should be designated as a supply chain risk and we’ve made our position on this clear to the Department of War.”

This isn’t standard corporate communication. It’s OpenAI publicly stating it told the Department of Defense (DOD) that Anthropic shouldn’t be considered a security risk, which implies someone at DOD is considering exactly that designation.

⚡

WireUnwired • Fast Take

- OpenAI announces DOD contract, explicitly claims “more guardrails” than Anthropic’s deployment

- Statement reveals OpenAI told DOD that Anthropic should not be designated a “supply chain risk”

- Comes as DOJ investigates Anthropic over national security concerns

- OpenAI’s three redlines: no mass surveillance, no autonomous weapons, no high-stakes automated decisions

OpenAI’s stated guardrails establish three redlines: no mass domestic surveillance using OpenAI technology, no directing autonomous weapons systems, and no high-stakes automated decisions like social credit systems. The company claims it protects these through “full discretion over our safety stack,” cloud deployment, cleared OpenAI personnel in the loop, and “strong contractual protections.”

The contrast OpenAI draws is pointed: other labs rely “primarily on usage policies” while OpenAI employs a “more expansive, multi-layered approach.” Usage policies are agreements about how technology will be used. Technical controls are restrictions built into the system itself. The distinction matters because policies can be amended; technical controls require engineering changes.

Whether OpenAI’s approach actually provides stronger protection is debatable. Usage policies combined with contractual terms can be legally binding and enforceable. Technical controls can be sophisticated but also require trust that they can’t be circumvented. OpenAI emphasizes having “cleared OpenAI personnel in the loop,” suggesting human oversight from company staff rather than purely automated deployment—but that also means OpenAI employees would have access to classified military systems.

The public nature of this statement is unusual. Government contracting typically involves confidential negotiations, and companies don’t usually name competitors when announcing deals. OpenAI’s decision to explicitly compare its agreement to Anthropic’s, plus the statement about supply chain risk designation, suggests competitive tensions have escalated beyond normal business rivalry.

Context matters here. The Department of Justice has been investigating Anthropic over national security concerns, though specific details remain unclear. OpenAI’s statement—acknowledging DOD is potentially considering Anthropic a supply chain risk while saying OpenAI opposes that designation—puts these concerns in public view in a way that benefits OpenAI competitively even as it ostensibly defends Anthropic.

Anthropic has not publicly responded to OpenAI’s statement as of publication. The company has previously emphasized its constitutional AI approach and commitment to AI safety, but hasn’t detailed specific arrangements with defense or intelligence agencies beyond acknowledging work in those spaces exists.

For the AI industry, this represents a new phase of competition around national security contracts. As major labs vie for government deployments worth potentially billions in revenue, safety practices and security clearances become competitive differentiators. OpenAI’s decision to make this competition explicit and public—naming Anthropic directly while claiming superior guardrails—marks a shift from private negotiation to public positioning.

User reaction has been harsh.

“You’ve made the deal. Cannot walk it all back now with these pathetic posts and attempts to normalise what you have done,” posted Jason Newton.

“I hope your company goes down the toilet. I cancelled my subscription today. I hope millions of others do the same.”

Comments like these reflect broader sentiment from users who see OpenAI’s guardrails announcement as damage control after signing a military contract, not a genuine safety commitment. Others pointed out the irony in OpenAI’s defense of Anthropic.

“You do realize the Department of War already designated Anthropic a supply chain risk,” wrote Nora Void. “The department of war just shat on your face and you licked yourself clean and called it a day.”

The implication: OpenAI publicly defending Anthropic while DOD has already made its determination makes OpenAI’s statement performative rather than substantive. The backlash highlights OpenAI’s positioning problem. Announcing military AI deployment while emphasizing redlines against autonomous weapons and mass surveillance reads as contradictory to users who question how those restrictions will be enforced in classified environments.

Claiming superior safety practices while naming a competitor during a DOJ investigation strikes many as opportunistic rather than principled. Whether this affects OpenAI’s consumer business remains to be seen. Enterprise and government contracts may outweigh subscription cancellations. But the gap between OpenAI’s public safety messaging and user perception of its actual practices continues to widen, and the company can’t easily close it through statements about guardrails, regardless of how many layers it claims to implement.

